Designing APIs and Applications for AI Agents: What CTOs Need to Know

Most software systems were built for a world where every API consumer was either a human developer or a predictable script. That world is ending. AI agents — autonomous software entities powered by large language models — are rapidly becoming the largest new consumer of your APIs, and they interact with systems in ways traditional design never anticipated.

For CTOs navigating AI agent development, the question is no longer whether AI agents will consume your APIs. The question is whether your APIs are ready to support them without breaking, leaking data, or burning through tokens on unnecessary retries. This guide distills the architectural shifts, protocol standards, and practical decisions you need to make your systems agent-ready in 2026.

Key Insight

AI agents don’t just call your APIs — they reason about them. Unlike traditional clients that execute predefined logic, agents interpret documentation, infer parameter usage, and adapt their behavior based on responses. This fundamentally changes what “good API design” means.

Why AI Agents Are Reshaping API Design

Traffic from AI-driven systems surged 49% in early 2025, according to Tollbit’s bot traffic report, and that growth has only accelerated since. The shift is not theoretical. Companies like Stripe, Twilio, and Shopify have already launched dedicated Model Context Protocol servers to capture agent-driven traffic. Being first to make your API machine-legible is becoming a competitive advantage.

The reason this matters for CTOs: AI agents don’t browse documentation casually. They consume your API specifications, parse your error messages, and chain multiple endpoints together to complete complex goals. When your API is confusing to an agent, it doesn’t ask a colleague for help — it retries, hallucinates parameters, or abandons your service entirely. Every ambiguity in your API now has a direct token cost and a measurable impact on conversion.

This is why agentic AI architecture demands a fundamentally different design philosophy: one that optimizes for machine comprehension alongside human readability.

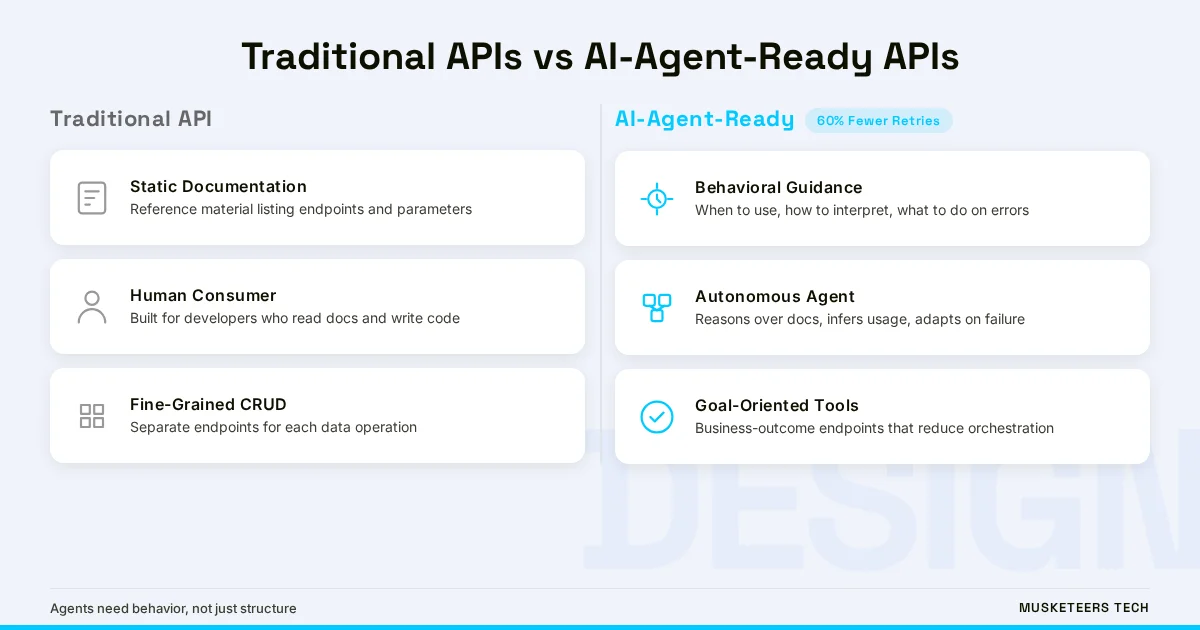

Traditional APIs vs AI-Agent-Ready APIs: Key Differences

Understanding the gap between conventional API design and what AI agents require is the first step toward building systems that serve both audiences. The differences span every layer of the stack, from documentation to error handling to response semantics.

| Aspect | Traditional API Design | AI-Agent-Ready Design |
|---|---|---|
| Primary consumer | Human developer or UI component | Autonomous AI agent or LLM |
| Documentation role | Reference material for developers | Behavioral guidance that shapes agent decisions |
| Interface granularity | Fine-grained CRUD endpoints | Goal-oriented, task-centric operations |
| Data output | Raw JSON records | Semantically enriched responses with context |
| Error handling | HTTP status codes | Detailed machine-readable remediation instructions |
| Integration flow | Service chaining by developers | Declarative, knowledge-driven orchestration |
| Security model | API keys and OAuth for humans | Fine-grained, role-based agent permissions |

The most important shift is from structure-first to behavior-first design. Traditional APIs document what an endpoint does. Agent-ready APIs document when to use it, how to interpret its responses, and what to do when something goes wrong. This behavioral layer is what separates APIs that agents can reliably use from those that cause expensive failure loops.

What AI Agents Need From Your APIs

AI agents operating autonomously require three things that most existing APIs fail to provide adequately: clarity, context, and semantic consistency. Understanding these requirements is essential for any CTO planning an AI agent architecture strategy.

Clarity: Reduce Cognitive Load

Agents should not need to reverse-engineer business logic from multiple microservice endpoints. Consider a scenario where an agent needs to determine a customer’s eligibility for a credit offer. In a traditional microservices setup, the agent must call four separate endpoints — customer profile, account status, payment history, and eligibility rules — then stitch these responses together. Each additional API call introduces latency, increases the risk of failure, and forces the agent to orchestrate logic that should be the API’s responsibility.

The solution: expose high-level, intent-aligned endpoints that encapsulate domain logic. A single GET /customerEligibilityStatus that returns a complete, actionable response eliminates orchestration complexity and lets the agent act rather than analyze.
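
As a rough illustration of that consolidation, the sketch below shows a server-side handler that does the four lookups itself and returns one actionable answer. The `fetch_*` helpers, the rule names, and the reason codes are hypothetical stand-ins, not a real service contract.

```python
from dataclasses import dataclass


@dataclass
class EligibilityStatus:
    customer_id: str
    eligible: bool
    reasons: list[str]


# Hypothetical stand-ins for the four internal services named above.
# In a real system these would be network calls behind the API boundary.
def fetch_profile(customer_id: str) -> dict:
    return {"customer_id": customer_id, "age": 34}


def fetch_account_status(customer_id: str) -> dict:
    return {"status": "active"}


def fetch_payment_history(customer_id: str) -> dict:
    return {"missed_payments_12m": 0}


def fetch_eligibility_rules() -> dict:
    return {"min_age": 18, "max_missed_payments_12m": 1}


def get_customer_eligibility_status(customer_id: str) -> EligibilityStatus:
    """Handler behind a single GET /customerEligibilityStatus call."""
    profile = fetch_profile(customer_id)
    account = fetch_account_status(customer_id)
    payments = fetch_payment_history(customer_id)
    rules = fetch_eligibility_rules()

    # The API, not the agent, applies the business rules.
    reasons = []
    if profile["age"] < rules["min_age"]:
        reasons.append("customer_below_minimum_age")
    if account["status"] != "active":
        reasons.append("account_not_active")
    if payments["missed_payments_12m"] > rules["max_missed_payments_12m"]:
        reasons.append("too_many_missed_payments")

    return EligibilityStatus(customer_id, eligible=not reasons, reasons=reasons)
```

The agent makes one call and receives a decision plus machine-readable reasons, instead of four payloads it has to reconcile.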

Context: Embed Meaning in Responses

Even when an agent receives correct data, raw numbers without interpretation are nearly useless for autonomous decision-making. An API response showing creditScore: 720 gives an agent a data point. An enriched response that adds creditScoreInterpretation: "Good — eligible for standard rates" and nextAction: "proceed_to_offer_selection" gives the agent both the data and the reasoning to take intelligent action.
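
A minimal sketch of that enrichment step might look like the following. The 670 threshold, the below-threshold wording, and the `request_manual_review` action are illustrative assumptions, not lending policy; only the field names come from the example above.

```python
def enrich_credit_score(credit_score: int) -> dict:
    """Attach an interpretation and a suggested next action to a raw score."""
    # Assumed cut-off, for illustration only.
    if credit_score >= 670:
        interpretation = "Good - eligible for standard rates"
        next_action = "proceed_to_offer_selection"
    else:
        interpretation = "Below threshold - not eligible for standard rates"
        next_action = "request_manual_review"

    return {
        "creditScore": credit_score,
        "creditScoreInterpretation": interpretation,
        "nextAction": next_action,
    }
```

Keeping the raw value alongside the interpretation lets an agent act on the guidance while still being able to audit the number behind it.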

Semantic Consistency: Maintain Stable Contracts

Agents rely on predictable data structures to build reliable workflows. In microservice environments, frequent schema changes or inconsistent field naming across endpoints create cascading failures. An agent that encounters a field named user_id in one endpoint and userId in another will waste tokens debugging a non-problem. Consistent naming conventions, stable schemas, and clear versioning policies are not just developer conveniences — they are operational necessities for AI agent integration.
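
One lightweight way to catch this kind of inconsistency is a governance check that runs in CI. The sketch below assumes you can list the field names each endpoint returns; the `schemas` input shape and the endpoint paths are hypothetical.

```python
import re


def normalize(field_name: str) -> str:
    """Collapse casing and separators so user_id and userId compare equal."""
    return re.sub(r"[^a-z0-9]", "", field_name.lower())


def find_naming_conflicts(schemas: dict[str, list[str]]) -> dict[str, set[str]]:
    """Return groups of field names that differ only in naming style."""
    variants: dict[str, set[str]] = {}
    for fields in schemas.values():
        for field_name in fields:
            variants.setdefault(normalize(field_name), set()).add(field_name)
    # Only groups with more than one spelling are conflicts.
    return {key: names for key, names in variants.items() if len(names) > 1}
```

For example, passing `{"/users": ["user_id", "email"], "/orders": ["userId", "total"]}` flags `user_id` and `userId` as two spellings of the same field.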

- Expose intent-aligned, high-level endpoints that reduce multi-call orchestration for agents

- Enrich API responses with semantic metadata, interpretations, and suggested next actions

- Maintain strict naming conventions and schema governance across all endpoints

- Version APIs clearly and communicate deprecation timelines to prevent agent workflow disruption

- Return minimal, relevant payloads to reduce token consumption and improve response latency

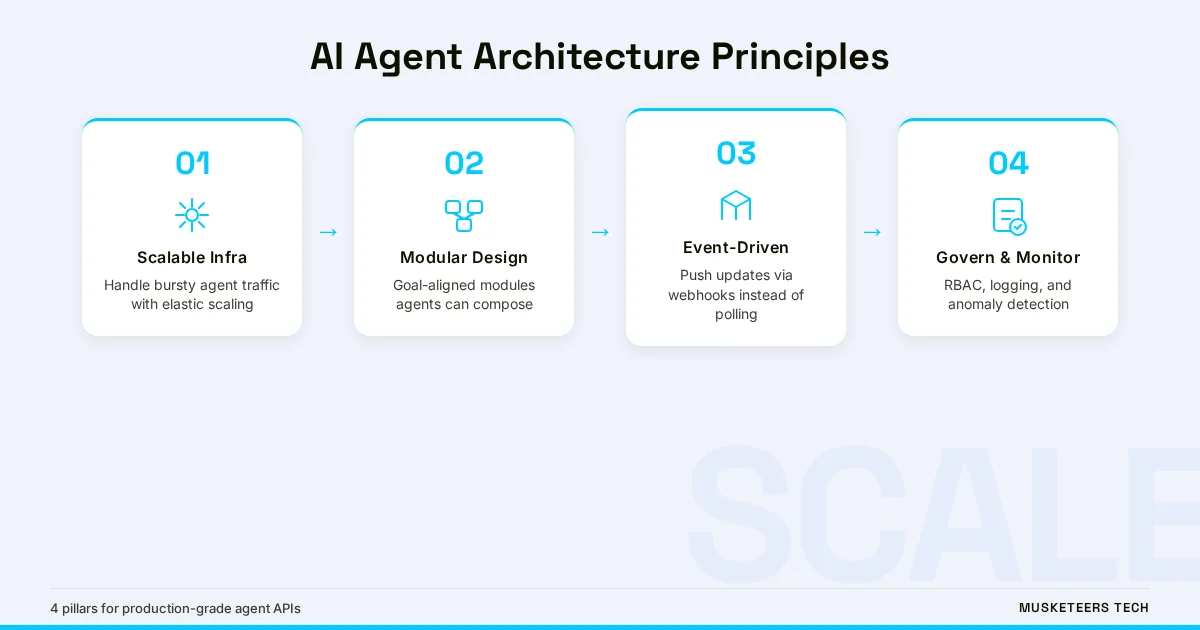

Core Architecture Principles for AI Agent Integration

Building an AI agent development platform that scales requires architectural decisions that go beyond individual endpoint design. These principles address how your entire system should be structured to support agentic workloads.

Scalability for Bursty Agent Traffic

AI agents behave differently from human users. A single agent can generate hundreds of API calls in minutes — far exceeding typical human interaction patterns. Your infrastructure must handle these bursty, high-volume request patterns without degrading performance for other consumers. Horizontal scaling, intelligent load balancing, and queue-based request prioritization become essential rather than optional.

Modularity with Agent-Friendly Abstractions

A modular architecture enables independent updates without disrupting agent workflows, but the abstraction layer matters. Plugin systems and function-calling frameworks allow APIs to grow without unnecessary complexity. The key is designing modules that align with business outcomes rather than technical operations — agents reason about goals, not database queries.

Event-Driven Responsiveness

Modern AI systems benefit from event-driven architectures that support real-time responsiveness. Instead of requiring agents to poll endpoints repeatedly, APIs can push relevant updates through webhooks or event streams. Standards like AsyncAPI help document these event-driven interfaces, much as OpenAPI does for REST endpoints. This reduces unnecessary traffic and gives agents timely data to reason with.
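
The push model can be sketched in a few lines. This is an in-process illustration only: the `EventBus` class and the `order.shipped` event name are hypothetical, and a production system would deliver events over webhooks or a message broker rather than direct function calls.

```python
from collections import defaultdict
from typing import Callable


class EventBus:
    """Tiny publish/subscribe registry: subscribers are notified, not polled."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> int:
        """Deliver the payload to every subscriber; return how many were notified."""
        handlers = self._subscribers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)
```

An agent that subscribes once to `order.shipped` receives the update when it happens, rather than spending calls (and tokens) asking whether anything has changed.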

Microservices Trap

Don’t drop AI agents into a dense jungle of fragmented microservices. Agents need clarity, not a hundred granular endpoints. Rationalize your API surface area before enabling agent access — consolidate around domain-centric interfaces and standardize schema governance first.

The Role of MCP, OpenAPI, and Emerging Standards

Protocol standardization is the foundation that makes scalable AI agent integration possible. Three standards matter most in 2026, and CTOs should understand where each fits in their stack.

OpenAPI Specification: The Foundation

A validated, up-to-date OpenAPI specification is table stakes for agent readiness. LLMs rely on descriptive specifications — not just structure, but context-rich descriptions of what each endpoint does and when to use it. The challenge is API drift: 75% of production APIs have endpoints that don’t match their specs, according to APIContext. Implementing OpenAPI directly into your development workflow with strict schema validation is the only way to maintain the consistency agents require.
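
A basic drift check can be as simple as comparing the operations in the spec with the routes actually seen in production. The sketch below assumes the spec is already loaded as a dictionary and that `observed` comes from your own gateway logs or router introspection; both inputs are assumptions about your setup.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def find_spec_drift(spec: dict, observed: set[tuple[str, str]]) -> dict:
    """Compare (METHOD, path) pairs in an OpenAPI document with observed routes."""
    documented = {
        (method.upper(), path)
        for path, path_item in spec.get("paths", {}).items()
        for method in path_item
        # Path items also carry non-operation keys such as "parameters".
        if method.lower() in HTTP_METHODS
    }
    return {
        "undocumented": sorted(observed - documented),
        "unimplemented": sorted(documented - observed),
    }
```

This only detects missing or extra operations. Checking that request and response bodies match their schemas needs a validator on top.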

Model Context Protocol (MCP): The Agent Interface Layer

MCP goes beyond specs to provide semantics for autonomous agents. Where OpenAPI tells an agent what your API does, MCP tells it when and how to use your API in context. MCP servers expose chunky, goal-oriented tools that combine multiple endpoints to achieve specific business outcomes rather than exposing raw CRUD operations.

The adoption pattern is clear: companies that wrap their APIs in MCP servers gain discoverability in agentic workflows. Converters like Speakeasy’s Gram and Tyk’s API-to-MCP tool can transform any OpenAPI specification into an MCP server, reducing the implementation barrier significantly.
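
To make "chunky, goal-oriented tools" concrete, here is a sketch of one tool described with the `name`, `description`, and `inputSchema` fields that MCP uses for tool definitions. It deliberately avoids any MCP SDK: the descriptor is a plain dictionary, and the order data, slot list, and reason codes are hypothetical.

```python
RESCHEDULE_DELIVERY_TOOL = {
    "name": "reschedule_delivery",
    "description": (
        "Move an existing order's delivery to a new date. Use when a customer "
        "asks to change when an order arrives. Do not use for cancellations."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "order_id": {"type": "string", "description": "ID of the order to move"},
            "new_date": {"type": "string", "format": "date", "description": "Requested date, YYYY-MM-DD"},
        },
        "required": ["order_id", "new_date"],
    },
}

# Hypothetical in-memory stand-ins for the order and scheduling services.
ORDERS = {"o-1": {"status": "confirmed", "delivery_date": "2026-04-02"}}
OPEN_SLOTS = {"2026-04-05", "2026-04-06"}


def reschedule_delivery(order_id: str, new_date: str) -> dict:
    """One tool call that wraps lookup, validation, and update."""
    order = ORDERS.get(order_id)
    if order is None:
        return {"ok": False, "reason": "order_not_found"}
    if order["status"] != "confirmed":
        return {"ok": False, "reason": "order_not_reschedulable"}
    if new_date not in OPEN_SLOTS:
        # Tell the agent what it can do instead of leaving it to guess.
        return {"ok": False, "reason": "slot_unavailable", "available_dates": sorted(OPEN_SLOTS)}
    order["delivery_date"] = new_date
    return {"ok": True, "order_id": order_id, "delivery_date": new_date}
```

The description carries the "when to use it" guidance, while the handler hides three lower-level operations behind one business outcome.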

OpenAPI, for its part, defines endpoints, methods, schemas, parameters, and authentication. It serves as the source of truth for API structure, is essential for both human developers and AI agent comprehension, and requires ongoing maintenance to prevent API drift.

LLM-Optimized Metadata

Adding an llms.txt file to your website’s root directory provides metadata that helps LLMs with discovery and site traversal. Over 600 organizations now use llms.txt files, including Anthropic, Cursor, and Perplexity. While its long-term adoption is debated, it represents a low-effort strategy to improve agent discoverability of your documentation and API resources.

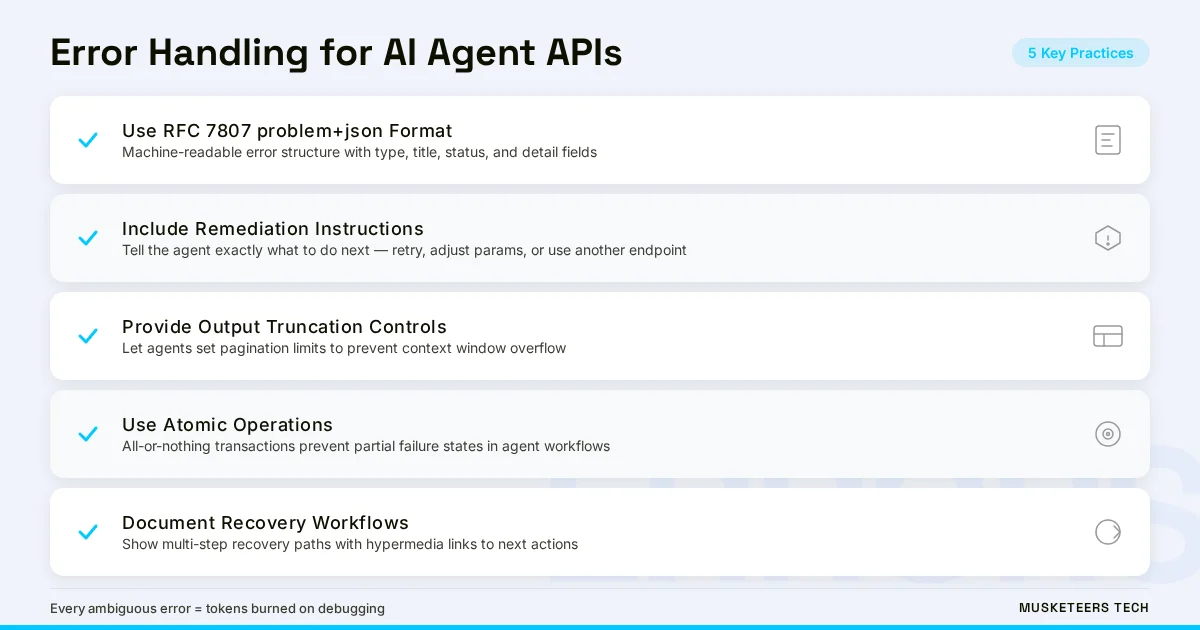

Error Handling and Resilience for Agentic Systems

When an AI agent hits an error, it doesn’t open a support ticket. It either retries, hallucinates a workaround, or abandons your API entirely. The quality of your error responses directly determines which of these outcomes occurs — and the financial impact is measurable in token costs and lost integrations.

Machine-Readable Error Responses

Standard HTTP status codes are insufficient on their own for AI agents. Each error response should include the error definition, the recommended next action, and examples of common failure cases. Adopting the application/problem+json media type (defined in IETF RFC 7807, since superseded by RFC 9457) provides a standardized, machine-friendly format that agents can parse and act on programmatically.
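
A sketch of such a response is shown below. The `type`, `title`, `status`, `detail`, and `instance` members are the standard problem details fields. `nextAction` and `retryable` are extension members invented for this example, as is the example URL.

```python
import json
from typing import Optional


def problem_response(
    status: int,
    title: str,
    detail: str,
    problem_type: str,
    next_action: str,
    retryable: bool = False,
    instance: Optional[str] = None,
) -> tuple[int, dict, str]:
    """Build (status, headers, body) for an application/problem+json error."""
    body = {
        "type": problem_type,
        "title": title,
        "status": status,
        "detail": detail,
        # Extension members (assumed names) that tell an agent what to do next.
        "nextAction": next_action,
        "retryable": retryable,
    }
    if instance is not None:
        body["instance"] = instance
    headers = {"Content-Type": "application/problem+json"}
    return status, headers, json.dumps(body)
```

A call such as `problem_response(422, "Invalid date", "new_date must not be in the past", "https://api.example.com/problems/invalid-date", "resubmit_with_future_date")` gives the agent both the failure and a concrete remediation, so it does not have to guess.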

Building Resilience to Probabilistic Behavior

LLMs are inherently probabilistic, which means agents can behave unpredictably in long-running tasks. Resilient API design includes output truncation controls (like pagination limits agents can specify), atomic operations that prevent partial-failure states, and clear empty-response semantics that distinguish “no results found” from “something went wrong.” These features act as shock absorbers, preventing agents from being overwhelmed by data or getting stuck in expensive retry loops.
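
Two of these shock absorbers, caller-specified limits and unambiguous empty responses, can be sketched together. The limit values and response field names below are assumptions chosen for illustration.

```python
from typing import Optional

MAX_PAGE_SIZE = 100      # assumed hard ceiling, regardless of what the agent asks for
DEFAULT_PAGE_SIZE = 20   # assumed default when no limit is given


def list_items(items: list, limit: Optional[int] = None, offset: int = 0) -> dict:
    """Return one page with explicit counts, so an empty page is not mistaken for an error."""
    size = DEFAULT_PAGE_SIZE if limit is None else max(1, min(limit, MAX_PAGE_SIZE))
    start = max(0, offset)
    page = items[start:start + size]
    truncated = start + len(page) < len(items)
    return {
        "status": "ok",               # an error would use an error response instead
        "results": page,
        "resultCount": len(page),
        "totalCount": len(items),
        "truncated": truncated,
        "nextOffset": start + len(page) if truncated else None,
    }
```

With this shape, an empty `results` list alongside `"status": "ok"` and `"totalCount": 0` clearly means "no results found", and an oversized `limit` is clamped rather than flooding the agent's context window.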

Documenting Workflows and Recovery Paths

Beyond individual error responses, documenting common multi-step workflows helps agents understand operational context. Showing how to combine endpoints to achieve business outcomes reduces hallucinated approaches and brings determinism to interlinked API flows. Hypermedia-style responses that include links to related actions give agents a structured awareness of what steps are possible next.
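
As a small illustration of the hypermedia idea, the sketch below attaches a `_links` object whose contents depend on the resource's state. The link relation names, the order statuses, and the URL layout are hypothetical.

```python
def order_representation(order: dict) -> dict:
    """Return the order plus links describing which actions are valid right now."""
    order_id = order["id"]
    links = {"self": {"href": f"/orders/{order_id}", "method": "GET"}}

    # Only advertise actions that make sense in the current state.
    if order["status"] == "pending":
        links["pay"] = {"href": f"/orders/{order_id}/payment", "method": "POST"}
        links["cancel"] = {"href": f"/orders/{order_id}", "method": "DELETE"}
    elif order["status"] == "paid":
        links["track"] = {"href": f"/orders/{order_id}/tracking", "method": "GET"}

    return {**order, "_links": links}
```

Because a paid order no longer advertises `cancel`, an agent has less room to attempt an action the API would reject anyway.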

Pro Tip

Every time an agent encounters an ambiguous error from your API, that’s tokens burned on debugging. Investing in detailed, machine-readable error messages has a direct ROI: fewer retries, lower token costs for consumers, and higher agent adoption rates.

Security, Authentication, and Governance for AI Agent APIs

AI agents introduce security challenges that extend well beyond traditional API protection. Increased autonomous traffic amplifies existing vulnerabilities while creating new attack surfaces that require purpose-built defenses.

Authentication for Non-Human Consumers

Standard OAuth 2.0 and OpenID Connect flows remain the recommended authentication approach — agents work best with established standards. Deviating from common web authentication patterns makes your API harder for agents to integrate with. API keys should never be hardcoded; agents must be designed to use environment variables or secret vaults, with regular key rotation enforced programmatically.
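
On the agent side, the "never hardcode" rule can be enforced with a small loader that fails loudly when the credential is missing. The `AGENT_API_KEY` variable name and the Bearer header format are assumptions for this sketch; a secret vault client would replace the environment lookup.

```python
import os
from typing import Mapping, Optional


class MissingCredentialError(RuntimeError):
    """Raised when the expected credential is not configured."""


def load_api_key(env_var: str = "AGENT_API_KEY", environ: Optional[Mapping[str, str]] = None) -> str:
    """Read the key from the environment instead of from source code."""
    env = os.environ if environ is None else environ
    key = env.get(env_var, "").strip()
    if not key:
        raise MissingCredentialError(
            f"Set {env_var} via the environment or a secret manager; do not hardcode it."
        )
    return key


def auth_headers(api_key: str) -> dict:
    """Build request headers; the Bearer scheme is an assumption about the target API."""
    return {"Authorization": f"Bearer {api_key}"}
```

Passing `environ` explicitly keeps the function easy to test without touching the real process environment.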

Fine-Grained Agent Permissions

Not every agent needs full API access. Role-based access control should distinguish between agent types: a read-only information retriever needs different permissions than a planning agent with write access. Fine-grained scoping prevents overprivileged agents from taking unintended actions and limits the blast radius of compromised credentials.
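
A minimal sketch of that distinction follows. The role names and the `resource:action` scope strings are hypothetical; in practice they would usually map onto OAuth scopes issued with the agent's token.

```python
# Hypothetical mapping of agent roles to the scopes they are granted.
ROLE_SCOPES = {
    "retriever": frozenset({"orders:read", "catalog:read"}),
    "planner": frozenset({"orders:read", "orders:write", "catalog:read"}),
}


def is_allowed(role: str, required_scope: str) -> bool:
    """Deny by default: unknown roles and unlisted scopes get no access."""
    return required_scope in ROLE_SCOPES.get(role, frozenset())
```

Here a `retriever` agent can read orders but cannot write them, so a compromised read-only credential cannot be used to modify data.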

Traffic Control and Abuse Prevention

Agentic AI traffic is inherently bursty and unpredictable. Dynamic rate limiting, concurrency caps, and tiered quotas help separate AI traffic from human usage. Providing bulk operation endpoints and asynchronous processing options reduces strain from agents that would otherwise loop through repetitive individual calls. Logging and monitoring all agent interactions is critical for detecting anomalous behavior early.
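
One common way to absorb bursts while capping sustained throughput is a token bucket, sketched below for a single agent identity. The capacity and refill values are whatever your tiers dictate; the injectable `clock` parameter is an addition for testability, not part of any standard.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows short bursts up to `capacity`, refilled at a steady rate."""

    def __init__(
        self,
        capacity: int,
        refill_per_second: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock
        self._tokens = float(capacity)
        self._last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Return True and spend tokens if the request fits, else False."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill for the time that has passed, never above capacity.
        self._tokens = min(self.capacity, self._tokens + elapsed * self.refill_per_second)
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False
```

Keeping one bucket per agent credential, with different capacities per tier, is one way to separate agent traffic from human usage. A rejected call is a natural place to return a structured error that tells the agent when to retry.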

Data Privacy and Ethical Considerations

APIs serving AI agents must collect only essential data, delete it promptly or anonymize it when storage is needed, and clearly document data handling policies. As autonomous agents increasingly train on and reason with API data, transparency in sourcing and fairness in algorithmic decisions become operational requirements, not just compliance checkboxes.

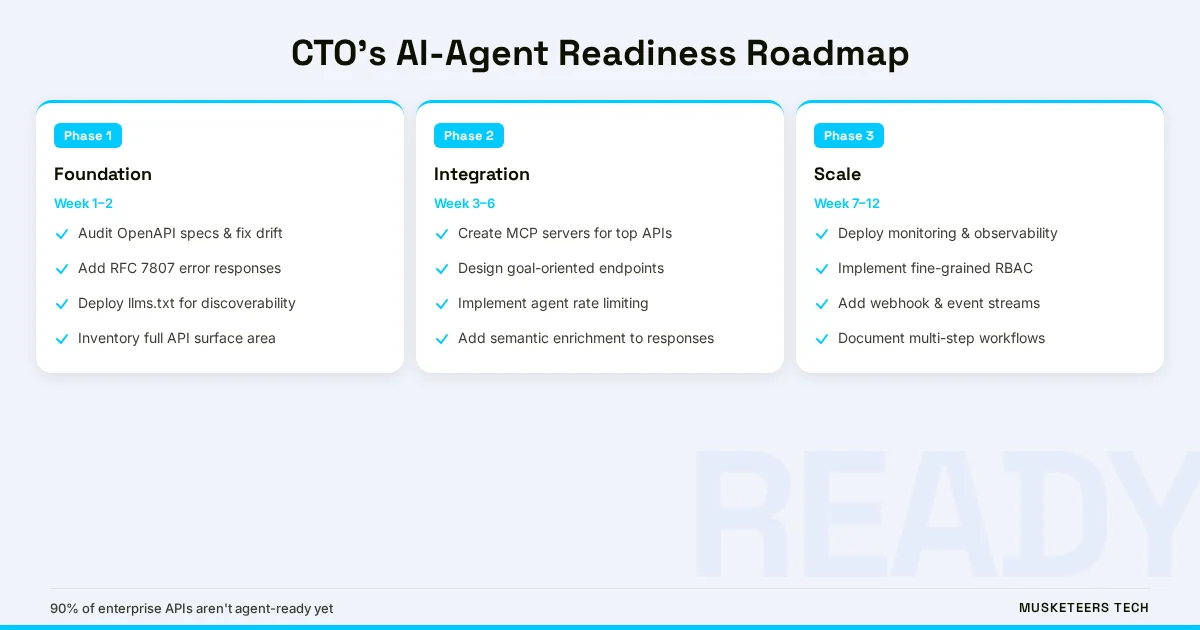

A CTO’s Readiness Checklist: Is Your System AI-Agent-Ready?

This is where theory meets your Monday morning. Use this checklist to assess your current API landscape and prioritize the highest-impact improvements for AI integration with agents.

Start with the basics that deliver the most immediate value:

- Audit your OpenAPI specs — Compare every production endpoint against its specification. Fix drift. Add context-rich descriptions to every parameter and response field

- Implement structured error responses — Adopt RFC 7807 (problem+json). Include remediation instructions in every error response

- Add an llms.txt file — Low effort, immediate discoverability improvement for AI crawlers

- Inventory your API surface — Document every public and web API, including those you consider “internal”

Frequently Asked Questions

What is the difference between a traditional API and an AI agent API?

A traditional API is designed for human developers or predictable scripts: it documents what endpoints do and relies on developers to orchestrate calls correctly. An AI agent API adds a behavioral layer that tells autonomous systems when to use each endpoint, how to interpret responses, and what to do when errors occur. This includes semantic enrichment of responses, machine-readable error remediation, and goal-oriented endpoint design that reduces the need for multi-call orchestration.

How Musketeers Tech Can Help

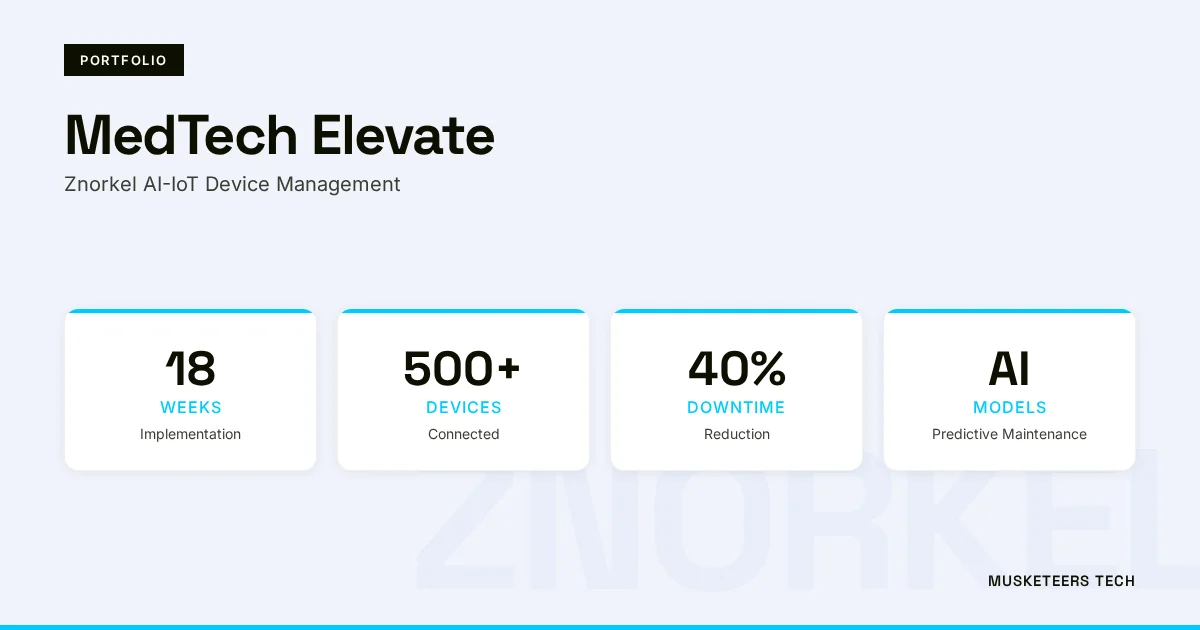

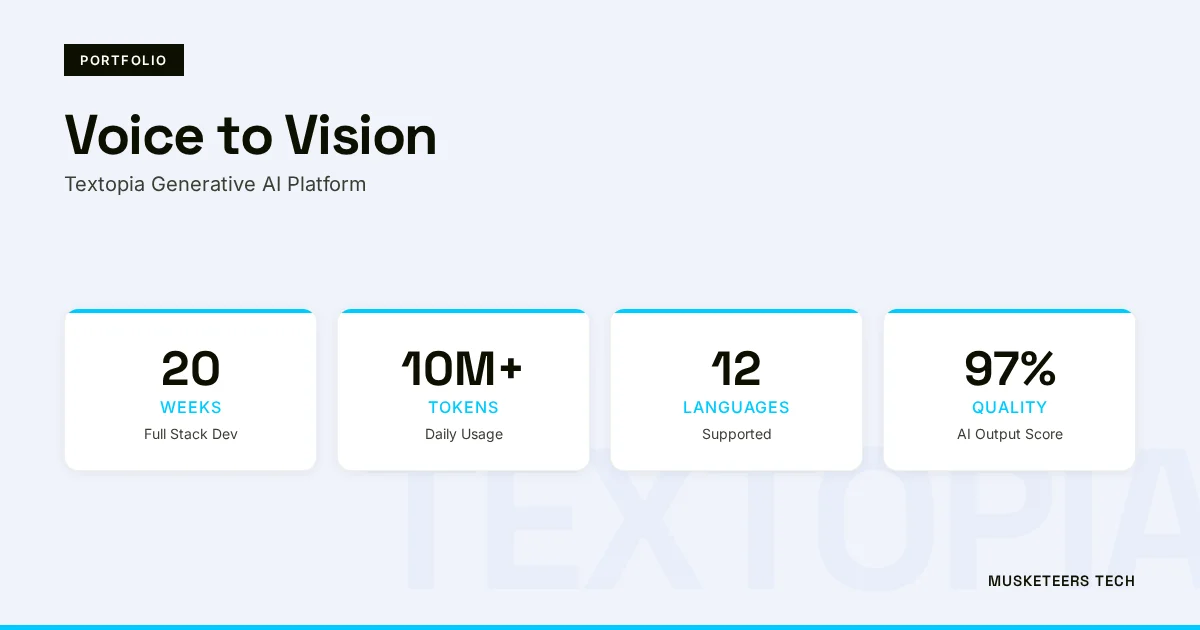

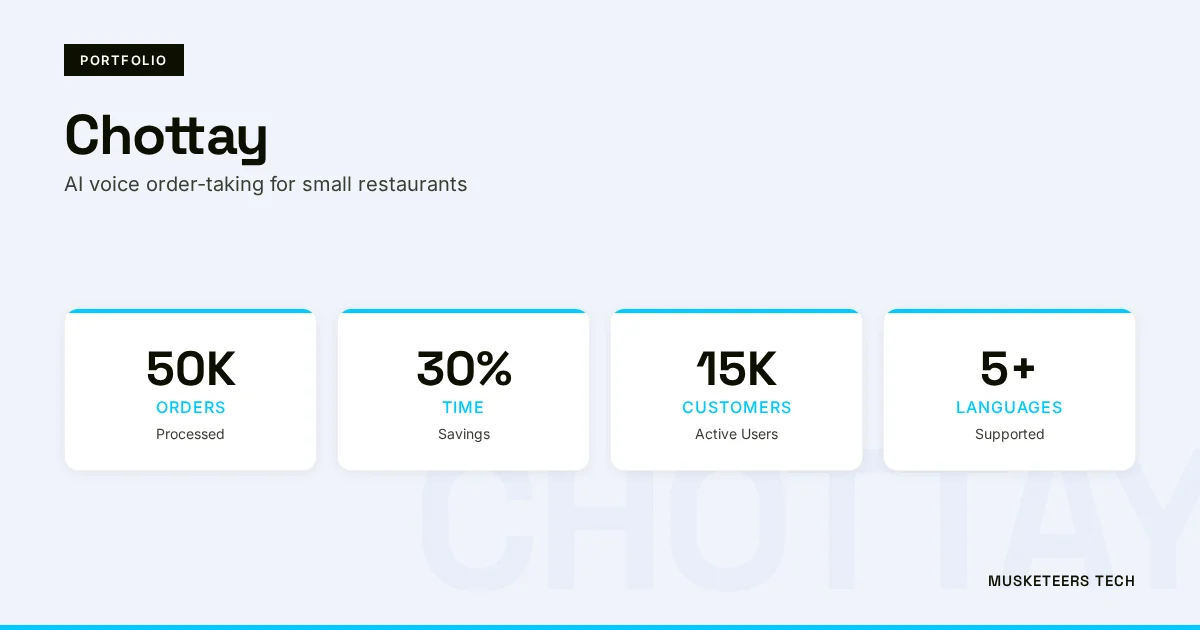

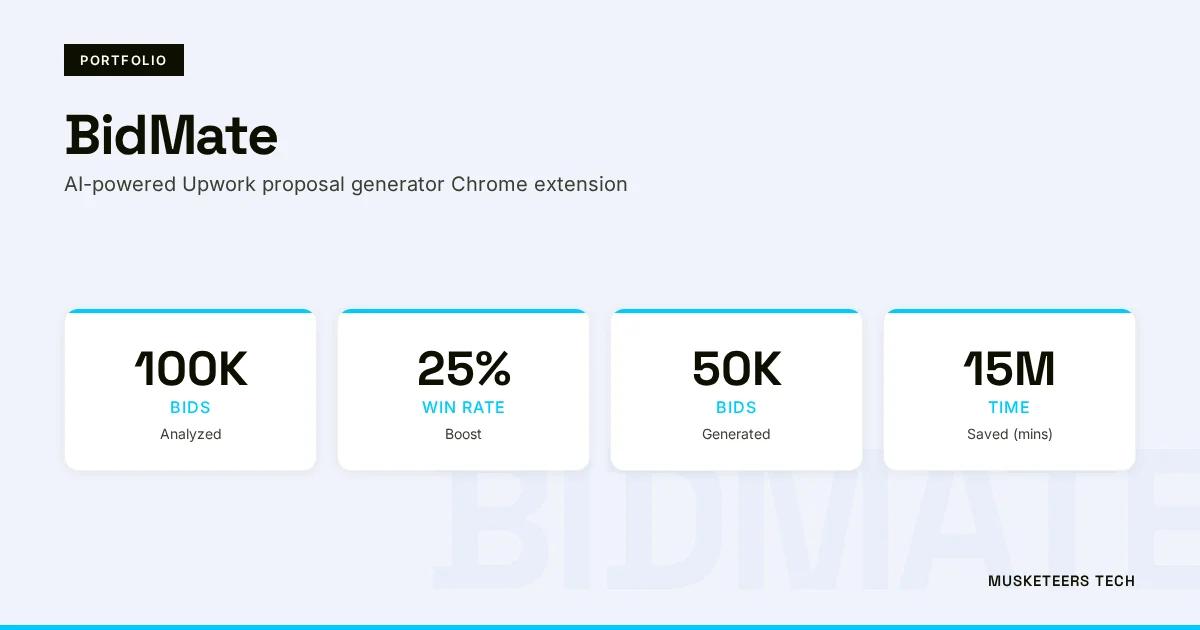

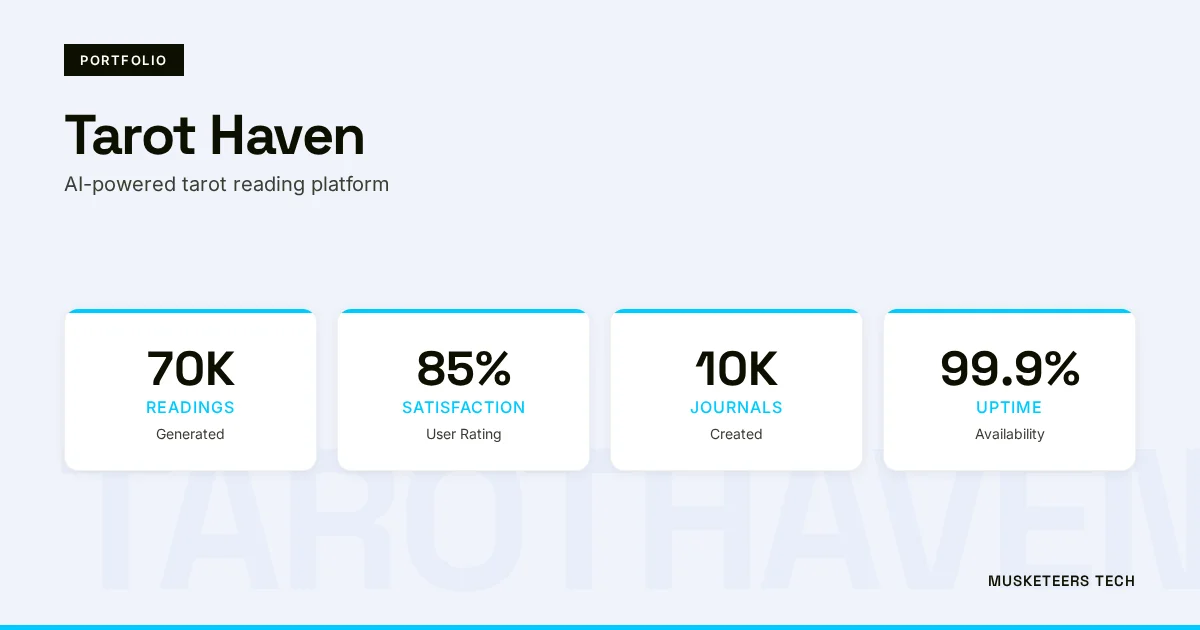

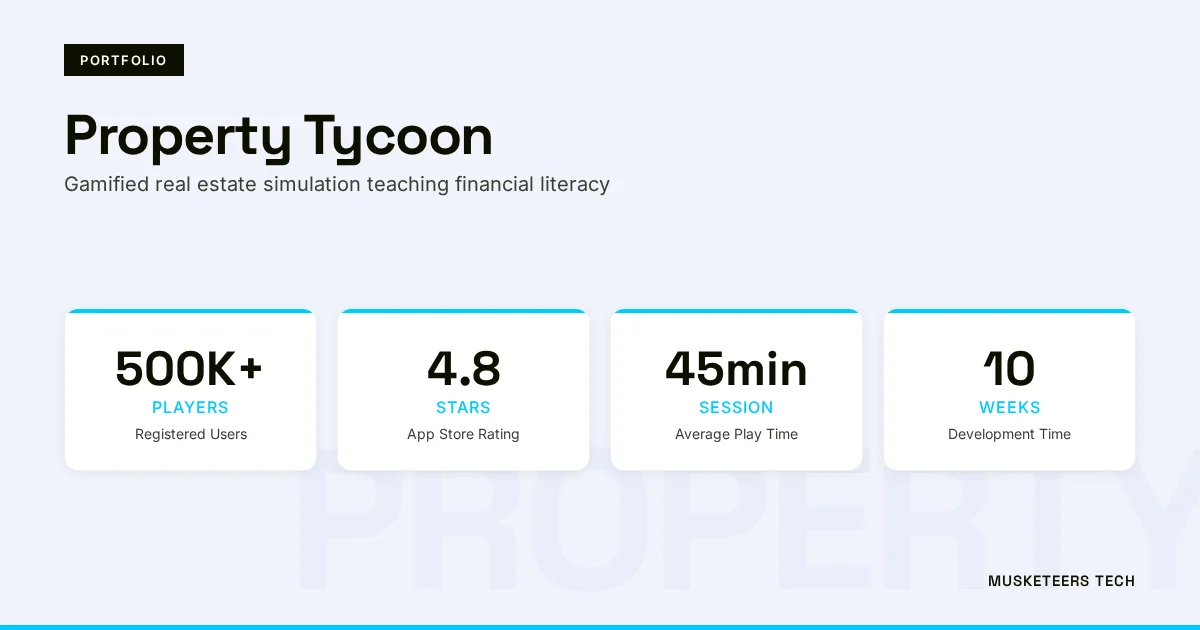

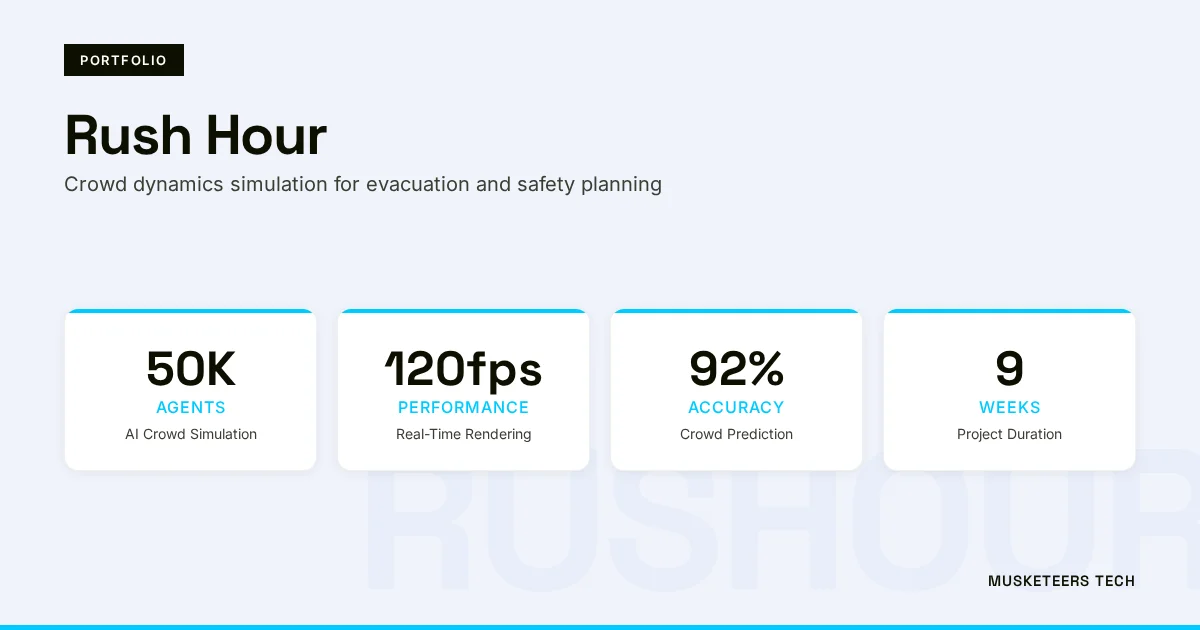

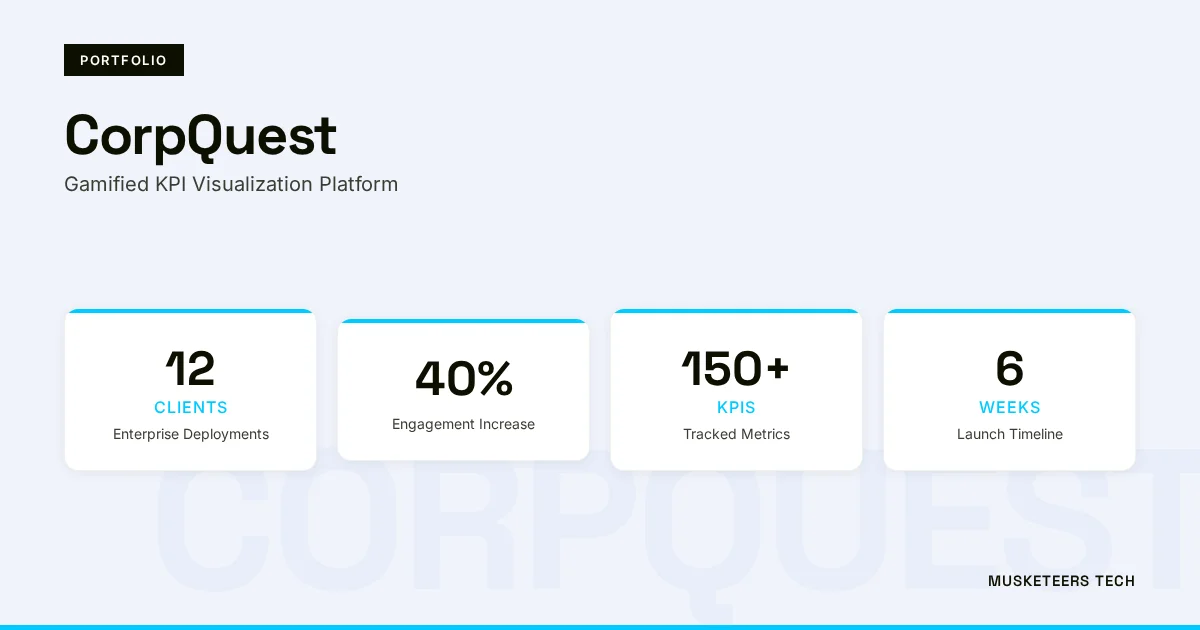

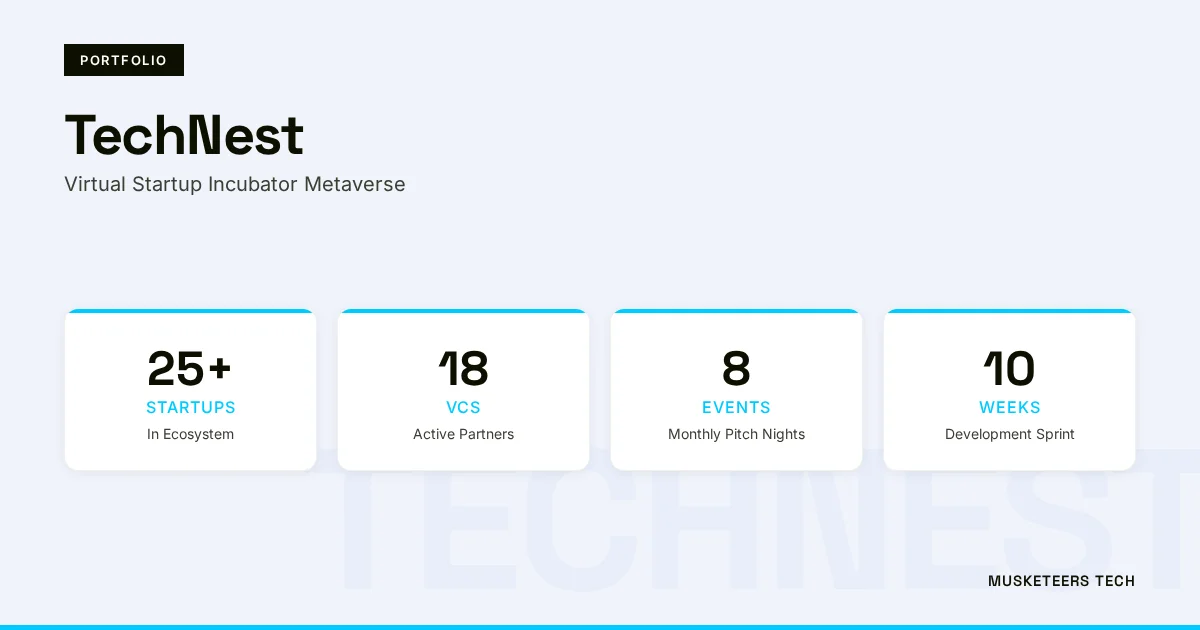

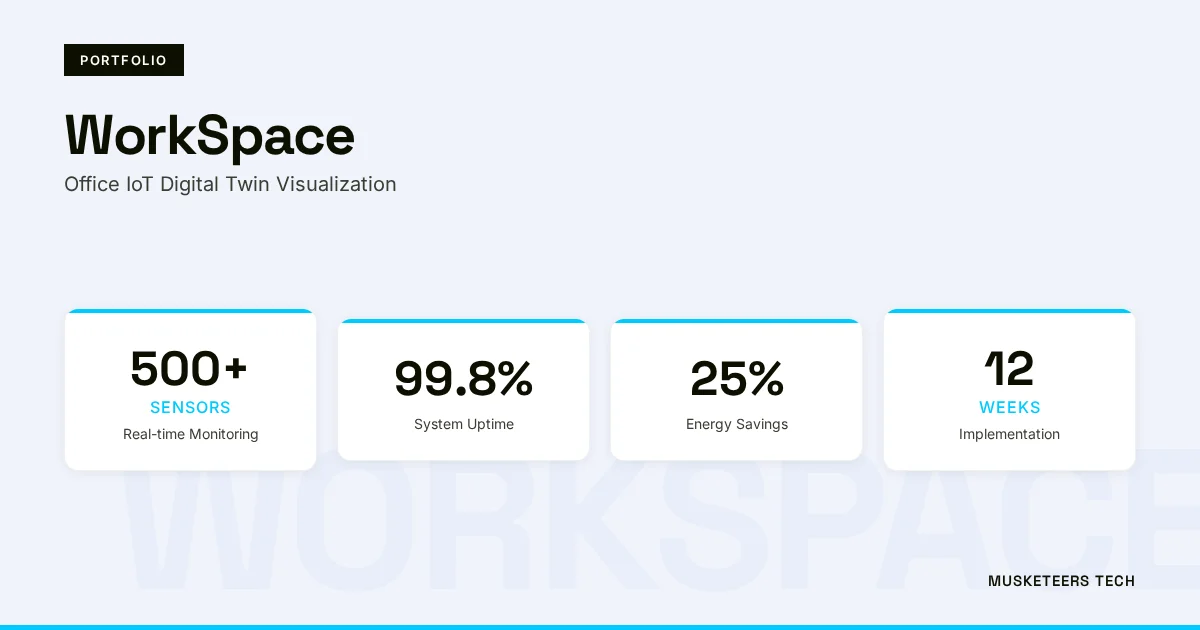

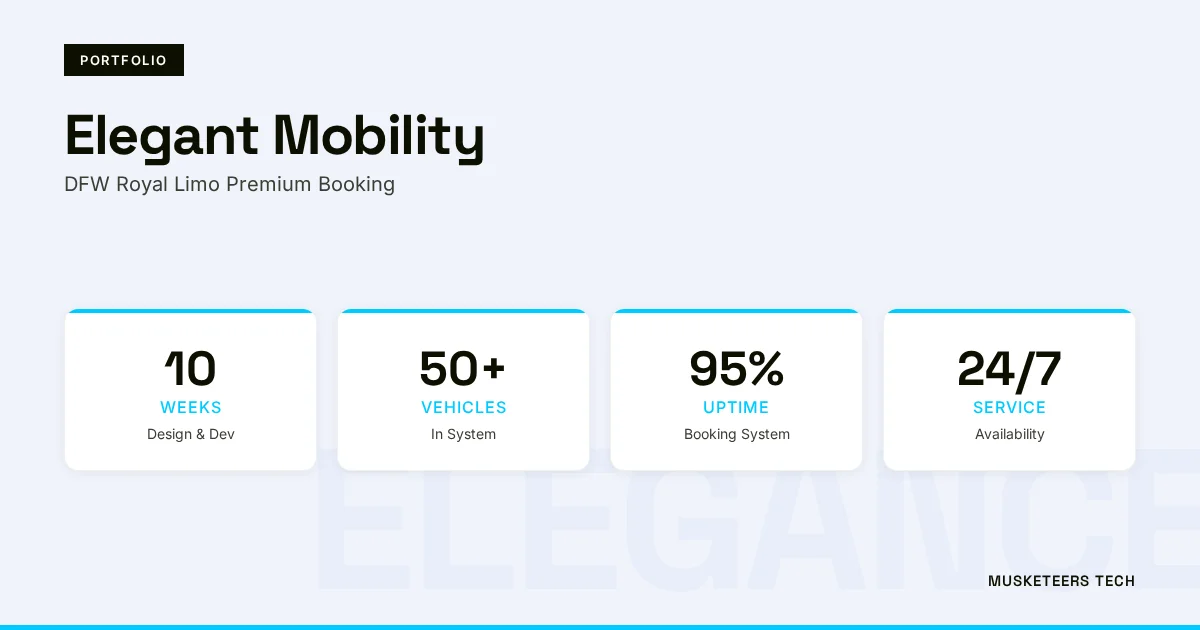

At Musketeers Tech, we help CTOs and engineering teams design, build, and deploy AI-agent-ready systems from the ground up. Our AI Agent Development team specializes in building autonomous agent architectures with proper API design, MCP integration, and production-grade governance. We have delivered agent-powered solutions across industries, from AI voice order-taking systems for restaurant automation to AI-powered proposal generators that integrate with complex third-party APIs.

Whether you need a technology strategy consultation to assess your API readiness, a fractional CTO to guide your agentic transformation, or a full generative AI application built to serve both human users and AI agents, our team has the experience to deliver. We don’t just wrap APIs in MCP and call it done — we architect systems where agents operate as capable, reliable partners in your business workflows.

AI Agent Development

Build autonomous AI agents with production-grade API design, MCP integration, and enterprise governance.

CTO as a Service

Get strategic technology leadership to guide your API modernization and agentic AI transformation.

Final Thoughts: Design for Understanding, Not Just Access

The shift from human-first to agent-first API design is not a future trend — it is happening right now. Companies that make their APIs machine-legible, semantically rich, and resilient to probabilistic behavior will capture the fastest-growing consumer segment in the API economy. Those that don’t will find their services bypassed as agents route traffic to competitors who made themselves easier to work with.

The good news: you don’t need to rebuild everything at once. Start with your OpenAPI specs, layer on MCP, and invest in structured error handling. These three moves alone put you ahead of 90% of enterprise APIs currently in production.

The agentic era rewards clarity over complexity, context over raw data, and understanding over mere access. The CTOs who internalize this principle will build the platforms that AI agents — and the businesses behind them — choose to depend on. For more on building AI-powered systems, explore our guide on how AI agents are revolutionizing customer support and the transformative impact of cloud computing on scalable agent architectures.

Last updated: 31 Mar, 2026